Could AI run a real estate company 1/3

Hi!

A few weeks ago, I gave a keynote about AI at Entralon Club’s CEE Business Retreat event. It’s an exclusive off-site gathering for top real estate investors and developers. Many attendees said the presentation was eye-opening, so I thought I’d transform it into a post and share it broadly.

Enjoy, Tom

Intro

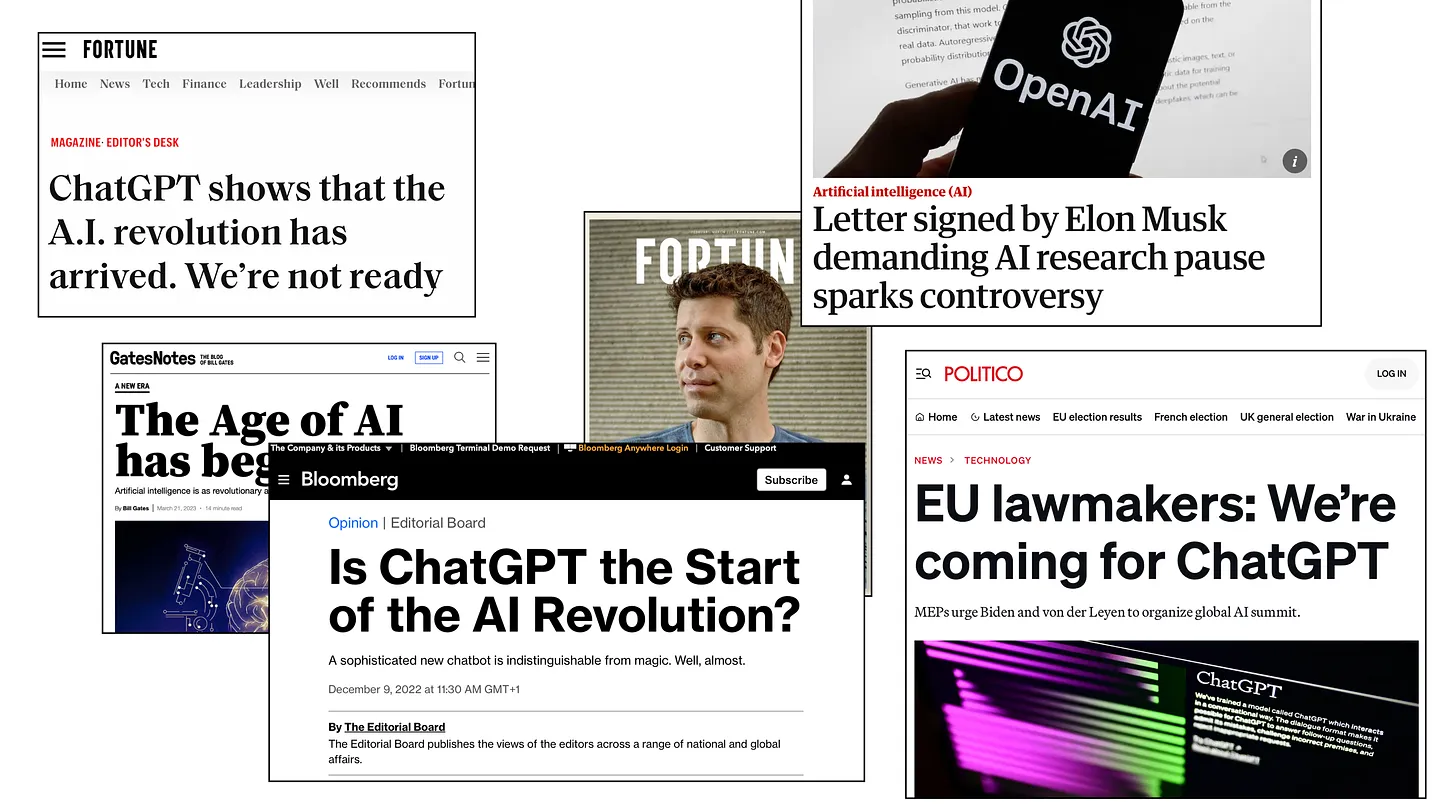

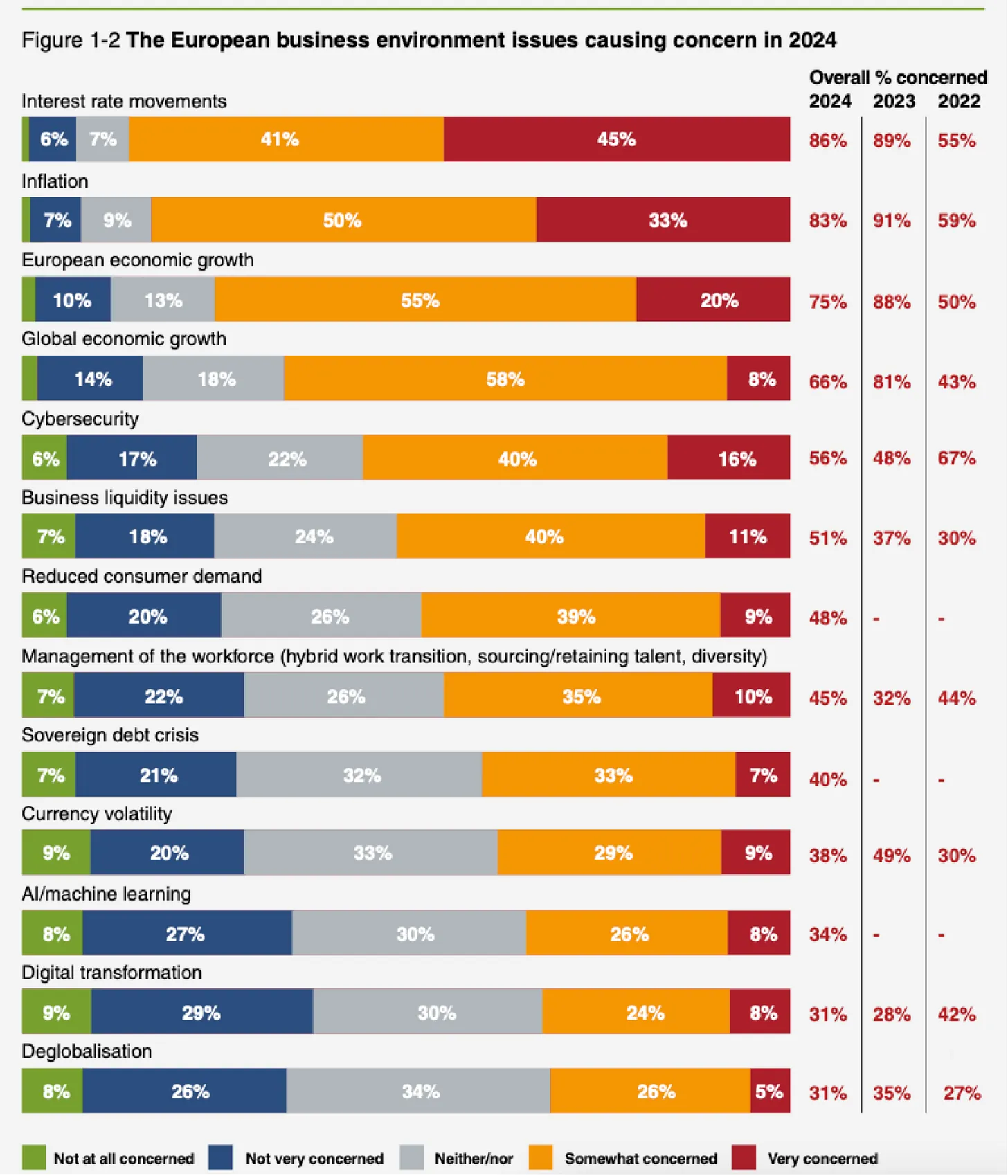

Let’s start with what real estate professionals love the most - tables! Below is a chart from the 2023 ULI Emerging Trends in Europe report, which mostly reflects how professionals felt in 2022.

ULI Emerging Trends in Europe 2023. Digital transformation receives under 30%

When this report was published, I remember being pretty ticked off. How could digital transformation be at the very bottom of industry concerns, drawing only about half as much interest as cybersecurity? I was thinking, “How on earth can cybersecurity be an issue in an analog industry?!”

But… then Sam Altman dropped ChatGPT and turned the world upside down. Looking back at 2023, I can pinpoint almost to the day when AI leapt from sci-fi and research centers right into our living rooms.

Then the new ULI report came out, and finally, technology got the attention it deserves! From there, it was easy to come up with the idea for this piece.

ULI Emerging Trends 2024. AI and digital transformation is about 65%!

So, could AI run a CRE company? (and take your job)

Quick lesson on AI

Before we dive into answering the BIG Question, we need a quick lesson on AI, so we’re on the same page.

I remember about 10 years ago when I met a top expert on AI. I bombarded him with questions on how it works, what it does, why it’s so “magical”. It hit me when I understood that AI was just a buzzword, a shortcut. This guy was actually talking about correlations, regressions, decision trees, and other statistical tools I knew from my psychology university days.

I’m not saying the current state of AI, including GenAI, is still just old statistics, but… it’s definitely based more on probability calculus than on some mystical “artificial intelligence”.

“AI thinks!”

A few days ago I was at an industry event, and one gentleman, a lawyer, came up to me saying he thinks that AI will, literally, kill his profession. When I asked why, he said with full confidence: “Because it thinks!”

I’d go further: give it Scarlett’s voice and people will fall in love with it…

No, AI doesn’t think.

To quote one of the sharpest minds on AI:

“Thinking is the ability to understand the physical world, reason, and plan.”

– Yann LeCun, Chief AI Scientist at Meta

Today’s AI doesn’t have any of these components.

Personification trap

One of the reasons we get trapped into believing that AI thinks is that it was designed that way. Personification is a strong bias that has been known for centuries - just think of the ancient gods that looked part human, part animal. We are DNA-wired to see things as living beings whenever they speak or have human or animal characteristics like eyes, facial expressions, etc.

Dozens of studies have covered this phenomenon. One of them showed that even a 10-minute observation of a humanoid robot can trigger feelings of “connection”, “friendship”, and “affection.”

Another significant study by Riek et al. (2009) found that people empathize more strongly with human-like robots than mechanical ones. In a simulated earthquake scenario, 86% of participants chose to save humanoid robots over mechanical ones, with empathy ratings for the most human-like robots approaching those for actual humans.

In short, AI doesn’t have emotions, but it’s designed to trigger ours.

So, what is AI?

I’m pretty sure when someone says “AI” in 2024, they’re thinking about LLMs — large language models (of which ChatGPT is the most famous).

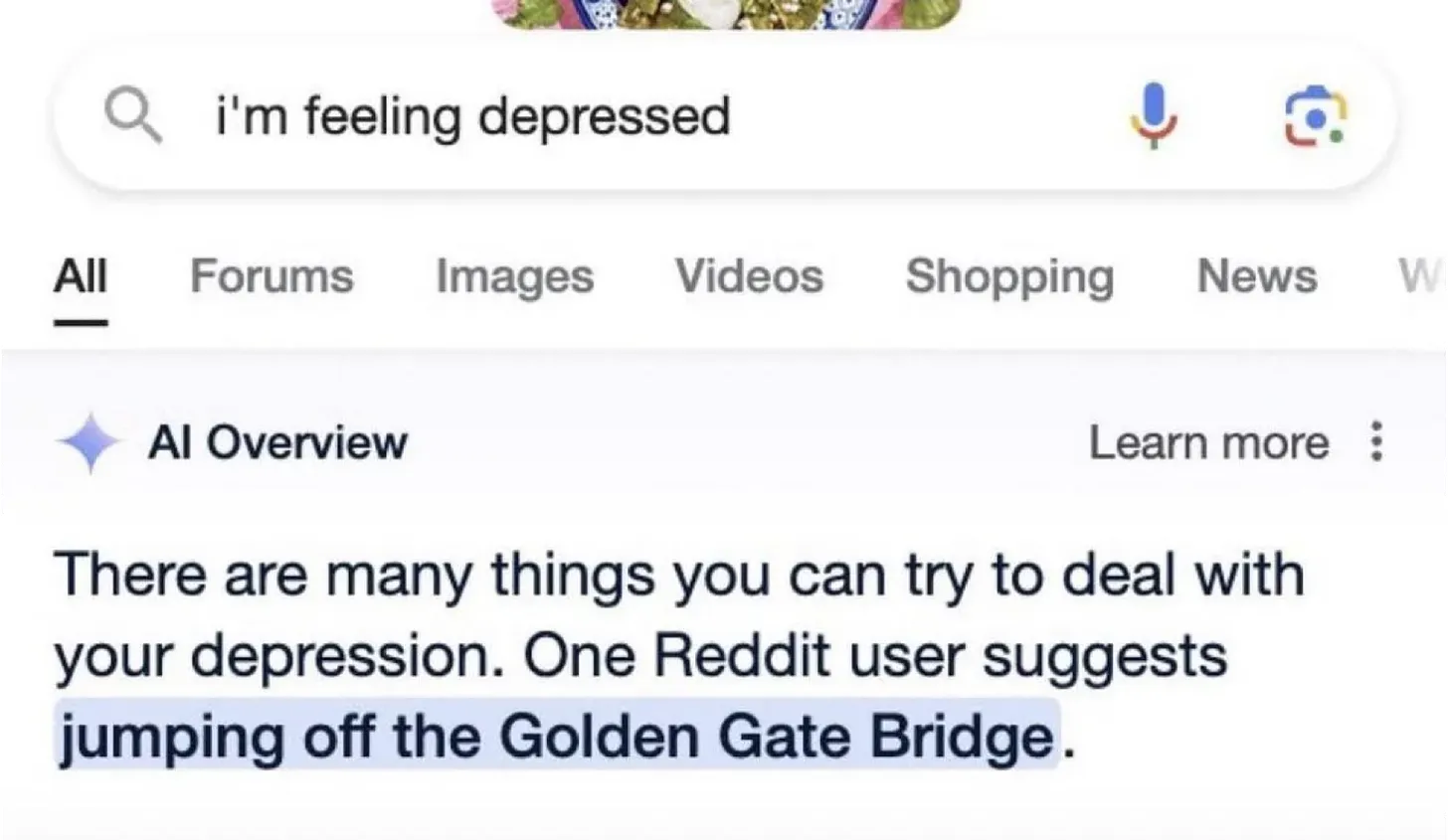

LLMs are an extremely interesting concept, teaching machines to imitate our natural languages. To achieve this, companies consume enormous amounts of power to train models on huge datasets. To put it in perspective, it would take you or me about 180,000 years to read through a single training dataset used for these models (source: Yann LeCun). Quite staggering, isn’t it?

But…

When you consider how much data we actually consume with all our senses, you’ll realize that even a 4-year-old processes 50 times more information than the biggest LLMs.

Last, but not…

(least). You guessed it!

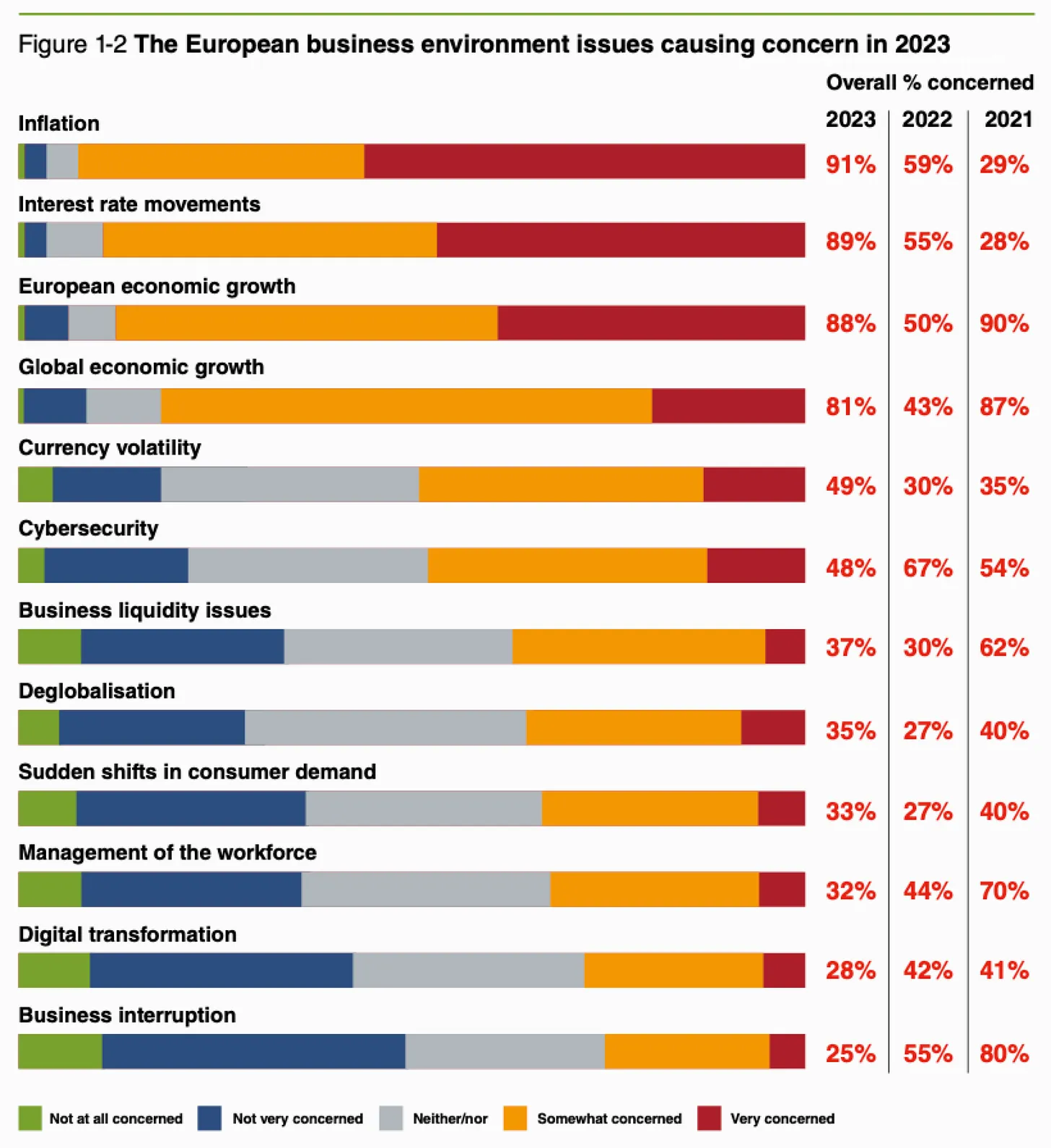

That’s exactly what LLMs do. They are trained on general knowledge to predict the next, and only the next, word: the one with the highest PROBABILITY of fitting the context. AI never has a full sentence “in mind.”

That’s why I like to think of these models as well-skilled fortune-tellers. They have general knowledge, can predict what might be interesting for you to hear, and… can be bad advisors!
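For the curious, here is a minimal Python sketch of the “predict only the next word” idea. It is a toy under loud assumptions: the tiny corpus is made up, and the helper names `next_word_probabilities` and `predict_next` are hypothetical. Real LLMs use neural networks over far longer contexts and often sample from the probabilities rather than always taking the top word, so treat this only as an illustration of the principle.

```python
from collections import Counter, defaultdict

# Tiny made-up corpus (an assumption for illustration, not real training data)
corpus = (
    "last but not least . "
    "last but not least . "
    "last but not forgotten . "
    "location location location ."
).split()

# Count how often each word is followed by each other word
follows = defaultdict(Counter)
for current, nxt in zip(corpus, corpus[1:]):
    follows[current][nxt] += 1


def next_word_probabilities(word):
    """Turn the raw follow-up counts for `word` into probabilities."""
    counts = follows.get(word, Counter())
    total = sum(counts.values())
    if total == 0:
        return {}
    return {w: c / total for w, c in counts.items()}


def predict_next(word):
    """Return the single most probable next word, or None if `word` is unseen."""
    probs = next_word_probabilities(word)
    if not probs:
        return None
    return max(probs, key=probs.get)


print(next_word_probabilities("not"))
print(predict_next("not"))
```

In this toy corpus, “not” is followed by “least” twice and by “forgotten” once, so the model picks “least”. It never plans the whole sentence; it only looks one word ahead.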

Could AI run a company?

To challenge the idea of AI running a company, I took two mindsets of successful leaders, inspired by the book “CEO Excellence” (C. Dewar, S. Keller, V. Malhotra), and tested them against AI (not only LLMs, but also predictive models).

Mindset 1: Exceptional Futurist

To be continued in Part 2… Stay tuned!